Image conversion is one of the most common tasks in image processing, and it comes in many forms: converting the file type, the bit depth, the color space, and so on. Today I’ll show you how to convert the bit depth of a bitmap image from 8bpp up to 24bpp (32bpp or more works the same way).

First, you probably already know what image bit depth is, but a quick reminder of the exact meaning will not hurt.

Bit depth refers to the color information stored in an image. The higher the bit depth of an image, the more colors it can store. The simplest image, a 1 bit image, can only show two colors, black and white. That is because the 1 bit can only store one of two values, 0 (white) and 1 (black). An 8 bit image can store 256 possible colors, while a 24 bit image can display about 16 million colors.

Along with an image’s resolution, the bit depth determines the size of the image. As the bit depth goes up, the size of the image also goes up because more color information has to be stored for each pixel in the image.
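To make the numbers above concrete, here is a small sketch of the arithmetic. The class and method names are my own, chosen for illustration only, and the size calculation ignores headers, palettes and row padding.

```java
// Hypothetical helper, not part of any library.
final class BitDepthMath {
    /** Number of distinct values a pixel of the given bit depth can hold: 2^bits. */
    static long colorCount(int bitsPerPixel) {
        return 1L << bitsPerPixel;
    }

    /** Raw pixel storage in bytes, ignoring headers, palettes and row padding. */
    static long rawPixelBytes(int width, int height, int bitsPerPixel) {
        long bits = (long) width * height * bitsPerPixel;
        return (bits + 7) / 8; // round up to whole bytes
    }
}
```

For a 256 x 256 picture, going from 8bpp to 24bpp triples the raw pixel data from 65,536 to 196,608 bytes.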

Second, before you work with a bitmap, you should know what it is. Wikipedia says: “

In computing, a bitmap is a mapping from some domain (for example, a range of integers) to bits, that is, values which are zero or one. It is also called a bit array or bitmap index.

In computer graphics, when the domain is a rectangle (indexed by two coordinates) a bitmap gives a way to store a binary image, that is, an image in which each pixel is either black or white (or any two colors) ”

The main task of this post is converting an 8bpp bitmap image to 24bpp, so I think that is enough background. Now, let’s start.

1. Bitmap Structure

A bitmap file in RAM or ROM begins with a header, which can be skipped here (we do not check it, assuming that the bitmap already has the required format). After the header comes the data section, which holds the colour information for the pixels. In the 4bpp file used in this example, a single byte (8 bits) holds the colour information for two pixels: the 4 most significant bits describe the pixel on the left, the 4 least significant bits describe the pixel on the right. Each colour is encoded as a 4-bit index into the colour table (which is stored together with the BMP header). The colour table used in the file from this exercise has 16 entries (parameters: Bits per pixel = 4, NumColors = 16).

The order of the pixels in a BMP file is as follows: from left to right, from bottom to top (the first pixel is the lower left corner of the picture). As a first step, the picture can be displayed upside down, just to test reading the data from memory.

Each line is padded with zeros at the end so that its length is a multiple of 32 bits. In this example no padding is needed, since each line has 256 pixels at 4 bits each, i.e. exactly 32 groups of 32 bits.

2. The Color Table

If we are dealing with images having a bit depth of 8 or less, then the pixel data is actually an index into a color palette. For instance, in a 4-bit image there are a maximum of 16 colors in the palette. If the data for a particular pixel is, say, 9, then the color that is used for that pixel is given by the tenth entry in the table (because the numbering starts with 0 and not 1).

In all versions of BMP files starting with Version 3 (Win3x), the color entries occupy 4 bytes each so that they can be efficiently read and written as single 32-bit values. Taken as a single value, the four bytes are ordered as follows: [ZERO][RED][GREEN][BLUE]. Due to the Little Endian format, this means that the Blue value comes first followed by the green and then the red. A fourth, unused, byte comes next which is expected to be equal to 0.
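As a minimal sketch of a palette lookup, the snippet below reads one 4-byte entry and packs it into a single 0xRRGGBB value. It assumes the color table has already been read into a byte array; `PaletteSketch` and `colorAt` are names I made up for this example.

```java
// Hypothetical helper: colorTable holds the raw table bytes, 4 per entry.
final class PaletteSketch {
    /** Packs the entry for the given palette index into 0xRRGGBB. */
    static int colorAt(byte[] colorTable, int index) {
        int base = index * 4;                       // each entry occupies 4 bytes
        int blue  = colorTable[base]     & 0xFF;    // stored first
        int green = colorTable[base + 1] & 0xFF;
        int red   = colorTable[base + 2] & 0xFF;
        // colorTable[base + 3] is the unused byte and is ignored
        return (red << 16) | (green << 8) | blue;
    }
}
```

A pixel whose data is 9 would be resolved with `PaletteSketch.colorAt(colorTable, 9)`, which reads the tenth entry.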

If we are dealing with 16-bit or 32-bit images, then the Color Table contains a set of bit masks used to define which bits in the pixel data to associate with each color. Note that the original Version 3 BMP format did not support these image types. Instead, these were an extension of the format developed for Windows NT. For both the 16-bit and the 32-bit variants, the color masks are 32 bits long, with the red mask first, the green mask second and the blue mask last. For 16-bit images only the low 16 bits of each mask are used, so the most significant two bytes of each mask are zero. The format requires that the bits in each mask be contiguous and that the masks be non-overlapping. The most common bit masks for 16-bit images are RGB555 and RGB565, while the most common bit masks for 32-bit images are RGB888 and RGB101010.
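The two requirements on the masks (contiguous bits, no overlap) are easy to check in code. This is only a sketch under my own naming; `MaskCheck` is not part of any BMP library.

```java
// Hypothetical validator for a set of three color masks.
final class MaskCheck {
    /** True if the set bits of the mask form one unbroken run (and the mask is non-zero). */
    static boolean isContiguous(int mask) {
        if (mask == 0) return false;
        int run = mask >>> Integer.numberOfTrailingZeros(mask); // drop the trailing zeros
        return (run & (run + 1)) == 0; // a solid run of ones plus one has no bit in common with it
    }

    /** True if every mask is contiguous and no two masks share a bit. */
    static boolean areValid(int red, int green, int blue) {
        boolean contiguous = isContiguous(red) && isContiguous(green) && isContiguous(blue);
        boolean disjoint = (red & green) == 0 && (red & blue) == 0 && (green & blue) == 0;
        return contiguous && disjoint;
    }
}
```

For instance, the usual RGB565 masks 0xF800, 0x07E0 and 0x001F pass the check, while widening the green mask to 0x0FE0 fails it because it overlaps the red mask.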

If we are dealing with a 24-bit image, then there is no Color Table present.

3. The Pixel Data

The pixel data is organized in rows from bottom to top and, within each row, from left to right. Each row is called a “scan line”. If the image height is given as a negative number, then the rows are ordered from top to bottom.
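The row ordering rule can be sketched as a tiny mapping from "row as stored in the file" to "row counted from the top of the picture". The names below are hypothetical; `signedHeight` stands for the height value exactly as it appears in the header.

```java
// Hypothetical helper illustrating bottom-up versus top-down storage.
final class RowOrder {
    /** Maps the n-th stored scan line to its row index counted from the top of the picture. */
    static int imageRow(int fileRow, int signedHeight) {
        if (signedHeight < 0) {
            return fileRow;                    // negative height: rows are stored top to bottom
        }
        return signedHeight - 1 - fileRow;     // positive height: first stored row is the bottom row
    }
}
```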

In the uncompressed formats (including the color masked formats) each scan line is null-padded so as to occupy an integer number of dwords (4-byte words). In other words, the number of bytes needed to store each scan line must be a multiple of four and, if necessary, null bytes (bytes whose values are zero) are appended to the end of the pixel data for that row in order to make this so. For instance, in the 24-bit format each pixel requires three bytes of data. If the image is 13 pixels wide then each row requires 39 bytes of data and a single null byte would be appended to bring this total to 40.
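The padded length of a scan line can be computed for any bit depth by rounding the number of pixel bits up to whole 32-bit words. A minimal sketch, with a class name of my own choosing:

```java
// Hypothetical helper: stored length of one scan line in bytes.
final class Stride {
    /** Pixel bits rounded up to a whole number of 4-byte words. */
    static int rowBytes(int width, int bitsPerPixel) {
        return ((width * bitsPerPixel + 31) / 32) * 4;
    }
}
```

`Stride.rowBytes(13, 24)` gives 40, matching the 13-pixel example above.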

1-bit (Monochrome Bitmap)

Each byte of data represents 8 pixels. The most significant bit maps to the left most pixel in the group of eight. Any unused bits are set to zero.

4-bit (16 Color Bitmap)

Each byte of data represents 2 pixels. The most significant nibble maps to the left most pixel in the group of two. Any unused nibble is set to zero.

8-bit (256 Color Bitmap)

Each byte represents 1 pixel.
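The three indexed layouts above (1, 4 and 8 bits) follow the same pattern, so one routine can pull the palette index of any pixel out of a scan line. This is a sketch with hypothetical names, and it only handles bit depths of 1, 4 and 8.

```java
// Hypothetical helper for indexed (palette-based) scan lines.
final class IndexedPixels {
    /** Returns the palette index of pixel x in a scan line, for bitsPerPixel of 1, 4 or 8. */
    static int indexAt(byte[] row, int x, int bitsPerPixel) {
        int pixelsPerByte = 8 / bitsPerPixel;
        int b = row[x / pixelsPerByte] & 0xFF;                 // byte holding the pixel, as unsigned
        int positionInByte = x % pixelsPerByte;                // 0 = leftmost pixel in that byte
        int shift = 8 - bitsPerPixel * (positionInByte + 1);   // leftmost pixel sits in the highest bits
        int mask = (1 << bitsPerPixel) - 1;
        return (b >>> shift) & mask;
    }
}
```

With a row whose first byte is 0xA5, the 4-bit reading yields indices 10 and 5, and the 8-bit reading yields the single index 165.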

16-bit (Up to 65,536 Colors, but commonly 32,768 Colors)

Each pixel is represented by two bytes. A common representation is RGB555, which allocates 5 bits to each color, allowing for 32K colors while leaving one bit unused. Since the eye is most sensitive to green, another common representation is RGB565, which allocates this unused bit to the green component.
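As an illustration, an RGB565 pixel can be expanded to 8 bits per channel like this. The sketch assumes the usual layout with red in the top 5 bits, green in the middle 6 and blue in the low 5; a real file should be decoded with the masks from its own header. `Rgb565` is a made-up name.

```java
// Hypothetical helper: expands a 16-bit RGB565 value to 0xRRGGBB.
final class Rgb565 {
    static int toRgb888(int pixel) {
        int r5 = (pixel >> 11) & 0x1F;
        int g6 = (pixel >> 5) & 0x3F;
        int b5 = pixel & 0x1F;
        // Copy the high bits into the new low bits so that full scale maps to 255.
        int r = (r5 << 3) | (r5 >> 2);
        int g = (g6 << 2) | (g6 >> 4);
        int b = (b5 << 3) | (b5 >> 2);
        return (r << 16) | (g << 8) | b;
    }
}
```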

24-bit (16,777,216 Colors)

Each pixel is represented by three bytes. In a BMP file the first byte gives the intensity of the blue component, the second byte gives the intensity of the green component, and the third byte gives the intensity of the red component.

32-bit (Up to 4,294,967,296 Colors, but commonly 1,073,741,824 or 16,777,216 Colors)

Each pixel is represented by four bytes. Even though this means that over four billion colors could be represented, very few display devices are capable of such resolution. However, it can be desirable to store images with such high resolution so that significant processing can be performed on the data without significant degradation building up from accumulated round-off errors. Even so, it is generally sufficient to only maintain an additional two bits of information per pixel, so the RGB101010 representation allocates 10 bits to each color. Another common representation is the RGB888 which simply uses a 32-bit format to store 24-bit images in order to leverage the ability of the processor to work with data in 32-bit chunks more efficiently.

4. Conversion algorithm.

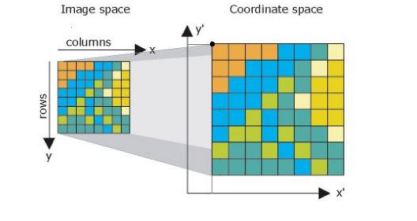

I think that we should focus on this section. The image bellow will show us how to do that.

4.1. Build FileHeader

The file header is 14 bytes long and the InfoHeader block is 40 bytes long; see the bitmap structure described above. By the way, I’ll use the Java programming language for all tasks.

Our job here is simply to copy the header of the original image (8bpp) to the new image (24bpp) and recalculate a few fields, e.g. FileSize and DataOffset.

FileSize = 54 + Height * Width * 3 + Height * Padding (bytes)

and

Padding = (4 - (Width * 3) mod 4) mod 4 (bytes)

A 24bpp bitmap image has no color table, so DataOffset = 54 (14 bytes of file header + 40 bytes of InfoHeader).
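Here is a small worked sketch of the two formulas for a 24bpp image. The class name `Bmp24Size` is hypothetical; the constant 54 is the header size used in the FileSize formula.

```java
// Hypothetical helper for 24bpp size calculations.
final class Bmp24Size {
    static final int HEADER_BYTES = 54;

    /** Number of zero bytes appended to each row so its length is a multiple of 4. */
    static int padding(int width) {
        return (4 - (width * 3) % 4) % 4;
    }

    /** Total file size: headers plus height padded rows of 3 bytes per pixel. */
    static int fileSize(int width, int height) {
        return HEADER_BYTES + height * (width * 3 + padding(width));
    }
}
```

For a 13 x 10 image, each row is 39 bytes plus 1 byte of padding, so the file size is 54 + 10 * 40 = 454 bytes.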

Because we write the file byte by byte by hand, we have to convert ASCII characters and integers to raw bytes. I wrote some helper methods like:

- private byte[] intTo2Byte(int x) {

- byte[] array = new byte[2];

- array[1] = (byte) x; // low byte

- array[0] = (byte) (x >> 8); // high byte

- return array;

- }

- public static byte[] stringToByteArray(String str) {

- return str.getBytes(); // uses the platform default charset; fine for plain ASCII such as "BM"

- }

- private static byte[] toByteArray(String input) {

- // to char array: input is a string of '0' and '1' characters

- char[] preBitChars = input.toCharArray();

- int bitShortage = (8 - (preBitChars.length % 8)) % 8; // outer % 8 avoids adding a whole extra byte

- char[] bitChars = new char[preBitChars.length + bitShortage];

- System.arraycopy(preBitChars, 0, bitChars, 0, preBitChars.length);

- for (int i = 0; i < bitShortage; i++) {

- bitChars[preBitChars.length + i] = '0';

- }

- // to byte array: character i sets bit (i % 8) of the byte counted from the end

- byte[] byteArray = new byte[bitChars.length / 8];

- for (int i = 0; i < bitChars.length; i++) {

- if (bitChars[i] == '1') {

- byteArray[byteArray.length - (i / 8) - 1] |= 1 << (i % 8);

- }

- }

- return byteArray;

- }
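The header code in this section also calls an intTo4Byte helper that is not listed in the post. A minimal sketch, assuming it mirrors intTo2Byte and puts the most significant byte at index 0 (the wrapper class name is only there to make the snippet compile on its own):

```java
// Assumed shape of the unlisted helper; the real one may differ.
final class ByteHelpers {
    /** Splits an int into 4 bytes, most significant byte first. */
    static byte[] intTo4Byte(int x) {
        byte[] array = new byte[4];
        array[0] = (byte) (x >> 24);
        array[1] = (byte) (x >> 16);
        array[2] = (byte) (x >> 8);
        array[3] = (byte) x;
        return array;
    }
}
```

With this ordering, writing index 3 first and index 0 last into the output produces the little-endian layout that BMP headers use.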

And the main method of this task:

- private static void transferFileHeader(Image image) {

- // set up the signature "BM" (bytes is the output buffer, assumed to be a static byte[] field declared elsewhere)

- byte signature[] = stringToByteArray("BM");

- bytes[0] = signature[0]; // 'B' character

- bytes[1] = signature[1]; // 'M' character

- // set up the file size in bytes, written little-endian (intTo4Byte returns the most significant byte first)

- byte fileSize[] = intTo4Byte(image.getFileSize());

- // System.out.println("byte 0:" + bytes[0]);

- bytes[5] = fileSize[0];

- bytes[4] = fileSize[1];

- bytes[3] = fileSize[2];

- bytes[2] = fileSize[3];

- // set up reserved = 0 (4 bytes)

- byte reserved[] = intTo4Byte(0);

- bytes[6] = reserved[0];

- bytes[7] = reserved[1];

- bytes[8] = reserved[2];

- bytes[9] = reserved[3];

- // set up dataOffset, also little-endian

- byte offSet[] = intTo4Byte(image.getDataOffset());

- bytes[10] = offSet[3];

- bytes[11] = offSet[2];

- bytes[12] = offSet[1];

- bytes[13] = offSet[0];

- }

- private static void setFileHeader(Image src, Image desc) {

- int paddedWidth = (4 - (src.getWidth() * 3) % 4) % 4;

- int fileSize = 54 + (src.getWidth() * src.getHeight() * 3) + src.getHeight() * paddedWidth; // paddedWidth is the number of bytes to add so that the row length mod 4 == 0

- // System.out.println("FileSize=" + fileSize + " bytes.");

- desc.setSignature(src.getSignature());

- desc.setFileSize(fileSize); // OK

- desc.setReserved(src.isReserved());

- desc.setDataOffset(54); // 14 bytes file header + 40 bytes InfoHeader

- // System.out.println(desc.getDataOffset());

- }
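As an alternative to shuffling bytes by hand, the same 14-byte file header can be sketched with java.nio.ByteBuffer set to little-endian order. This is not the approach used in this post, only an illustration; `FileHeaderSketch` and `build` are hypothetical names.

```java
// Alternative sketch using the standard java.nio API (fully qualified to avoid imports).
final class FileHeaderSketch {
    /** Builds the 14-byte BMP file header for the given file size and pixel data offset. */
    static byte[] build(int fileSize, int dataOffset) {
        java.nio.ByteBuffer buf = java.nio.ByteBuffer.allocate(14)
                .order(java.nio.ByteOrder.LITTLE_ENDIAN);
        buf.put((byte) 'B').put((byte) 'M'); // signature
        buf.putInt(fileSize);                // bytes 2..5
        buf.putInt(0);                       // reserved, bytes 6..9
        buf.putInt(dataOffset);              // bytes 10..13
        return buf.array();
    }
}
```

The buffer takes care of the byte order, so there is no need to reverse array indices when copying each field.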

See you at the next step!